Halcyon Architecture - "Director's Cut"

During GDC 2018, SEED unveiled our real-time hybrid ray tracing experiment, “PICA PICA”. Since then, colleagues have given a number of great presentations about our work on this project, primarily from the standpoint of image quality.

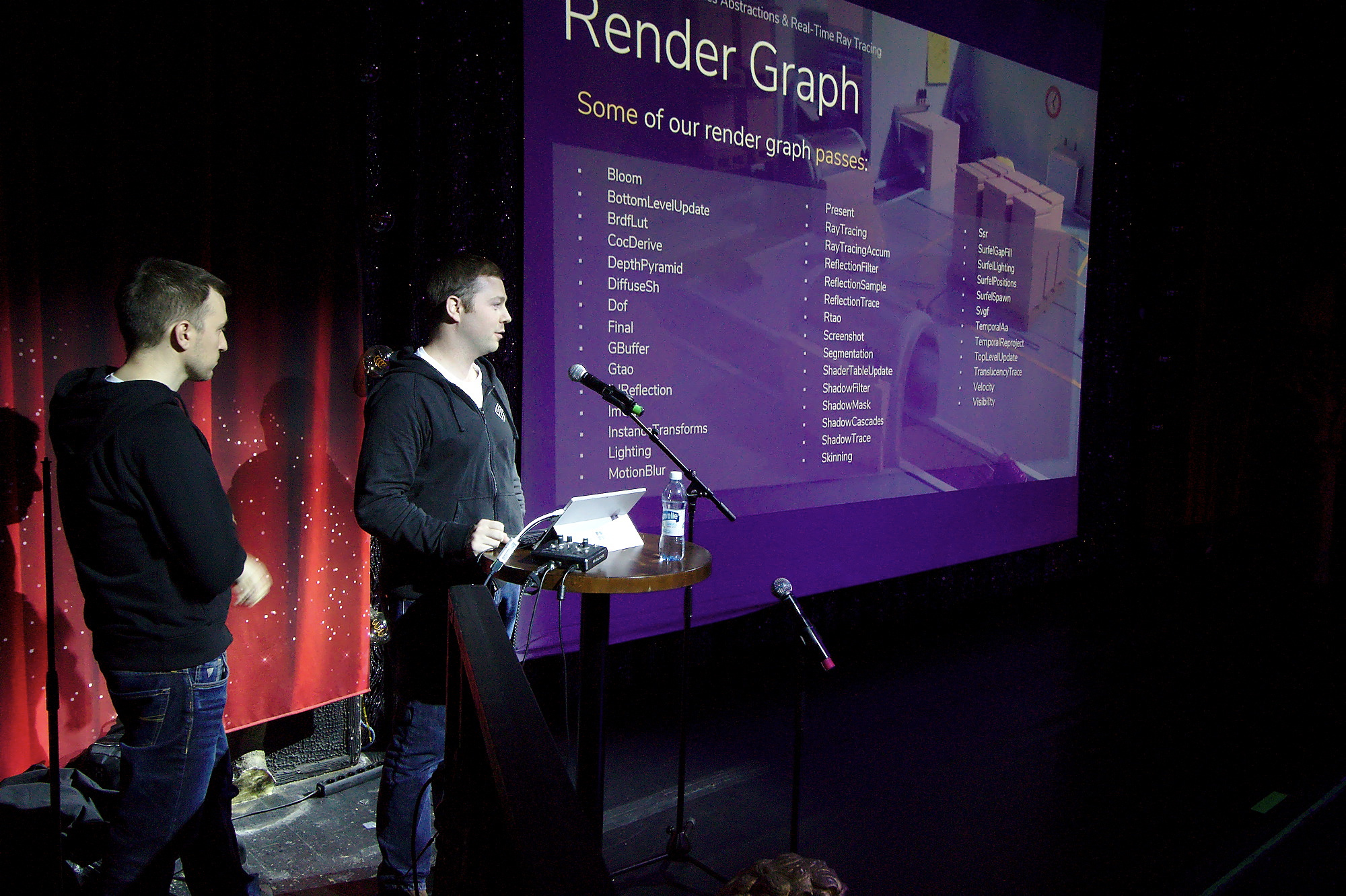

Some of these talks briefly referenced our R&D framework, “Halcyon”, but very few details were released about what Halcyon is or how it is designed. To share more about Halcyon itself, I recently gave two presentations on its current rendering architecture and capabilities.

The first presentation was delivered in October 2018 at the Khronos Munich Meetup, and this session focused on general abstractions, and how some of these abstractions map over to the Vulkan API. Presentation - thanks for the invite, Matthäus Chajdas!

The second presentation was delivered in November 2018 at SyysGraph in Helsinki, and this session focused further on abstractions, including our explicit heterogeneous multi-GPU scheduling, remote render proxy, and deep learning inference. Presentation - thanks for the invite, Jaakko Lehtinen!

A more relaxed and free-form version of this content was given as a lecture at Aalto University the following day.

I wanted to merge much of the currently public content into a “Director’s Cut”: a single slide deck that can be referenced instead of a multitude of presentations, each focusing on different aspects. Going forward, any new content will be merged into this slide deck, making it a living body of work rather than an immutable snapshot in time.

Enjoy!